Artificial SuperIntelligence (ASI) and the future of humanity: subjugated or super-powered?

Will Artificial SuperIntelligence (ASI) subjugate or superpower humans? Two recent books, Yuval Noah Harari’s Nexus and Ray Kurzweil’s The Singularity is Nearer, offer very different visions of our human future, one that will play out within most of our lifetimes.

The Nexus thesis

Harari looks at the human-AI relationship through a social anthropological lens. I take “nexus” to mean the complex and evolving networks of information that connect humans, societies and technologies, and his thesis is that control over these networks has always meant control over societies.

He believes that AI is fundamentally different from earlier information technologies: it can act autonomously, analyze and manipulate information at scale, and thus threatens to shift control away from humans. Harari is concerned that this could result in both the consolidation of power (through AI-controlled information flows) and the rise of new forms of manipulation and social control.

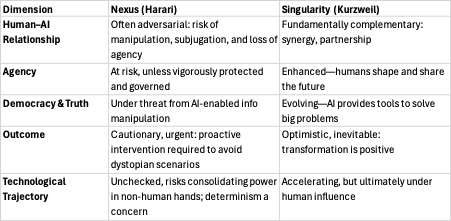

Harari evokes a largely adversarial scenario: he’s skeptical about humans simply collaborating with AI as benign partners. He sees a risk that we become subservient to, or manipulated by, increasingly powerful AI networks that escape human governance. The threat isn’t “killer robots,” but the subtle undermining of individual and collective agency, democracy, and even our sense of truth.

Despite this, Harari argues for the retention of human responsibility—urging us to actively shape and govern information technologies before we cede too much agency. The outcome is not preordained, but the risks and power imbalances are stark.

The Singularity thesis

Kurzweil’s thesis is one of integration: technology (especially AI) is growing exponentially and will soon reach a “singularity,” the point where machine intelligence surpasses human intelligence and blends with humanity via bio-digital integration (brain–computer interfaces, nanotechnology, etc.).

He expects human-AI convergence, not conflict, and adopts a fundamentally complementary perspective, treating AI as a tool and partner that extends our creativity, cognition, and lifespans.

His vision is not one of humans rendered obsolete, but of humans transcending biological boundaries through technological augmentation.

The singularity will be transformative and perhaps unpredictable, but Kurzweil is optimistic. For him, the merging of AI and human intelligence is a positive-sum process—we create the future, and our essence persists in new forms.

Kurzweil acknowledges perils (job displacement, loss of control), but sees these as manageable alongside the vast opportunities.

Harari’s “Nexus” positions AI as a disruptive force that risks becoming adversarial if left unchecked. He prioritizes the defense of human agency, democracy, and truth in a world where information can be weaponized.

Kurzweil’s “The Singularity is Nearer” sees the coming AI explosion as a continuation of humanity’s creative project, where human and machine intelligence seamlessly integrate for mutual benefit.

Both agree that today’s decisions will shape whether AI becomes our adversary or the ultimate complement to humanity—placing responsibility squarely on our collective choices and governance.

I thoroughly recommend both books.

Co-authored by my AI, Perplexity Pro.